Wonder about your world…

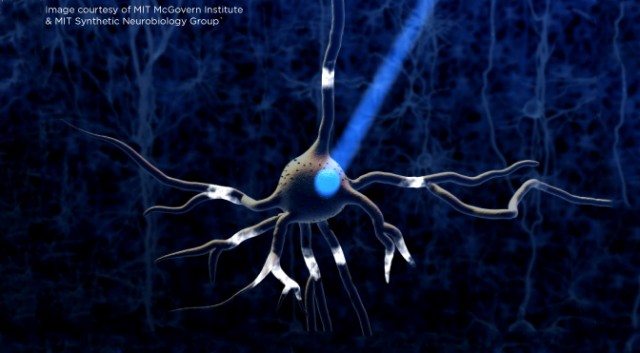

Take the plunge down the rabbit hole of insanity and wonder in this fast-paced, nonstop psychological thriller that will leave you questioning the very nature of reality and beyond. Part thriller, part romance, part existential horror, A Dream of Waking Life delves into lucid dreaming, psychedelics, existential ontology, video games, the nature of love, the nature of reality, and more.

Mendel’s Ladder delivers an adrenaline-fueled journey set on a dystopian future Earth, brimming with high-stakes action, adventure, and mystery. This epic series opener plunges readers into a world filled with diverse cultures, heart-pounding battles, and characters who will captivate their hearts and imaginations.

“E.S. Fein is raising the bar for quality as it’s a very well-written and thought-provoking book…There are points and themes in the story that could be discussed for eons as people will have their own idea on where it leads. It’s a book I would highly recommend.” – Andy Whitaker, SFCrowsnest